~ Welcome to #thebalance 47 ~

Woah, mid August already?? Busy times. A hurricane of change is upon me these days. Massive momentum in both my personal life and work life - if those are even separate ‘lives’ anymore, ha - mostly good, some negative, lots uncertain.

It’s exciting overall. Trying to find that ‘life fluidity’ as I call it. Stay tuned for updates.

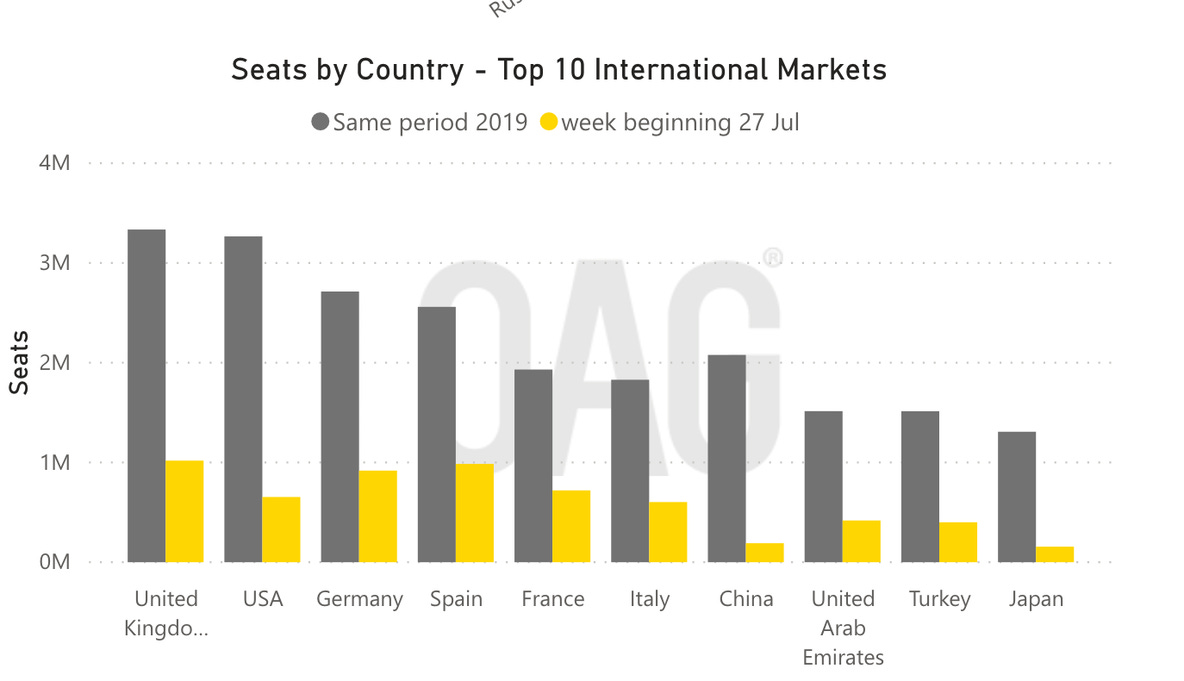

>> Be sure to check out the flight data chart in #thinkingthings (keeping it light this week) - pretty wild stuff! Jumping right in….

❤️ Likes, shares, and ‘my contacts’ adds are appreciated! It helps others discover what they have been missing out on while ensuring Gmail doesn’t junk this thing either :)

⬇️ #thinkingthings, #followerthings, and #otherthings ⬇️

____________________________________________________________________________

🤔🤔 #thinkingthings

🟥 >>>> Borders Rule Everything Around Me

We talk a lot around here about COVID as the great accelerator for technology and innovation. Most of the time, I try to highlight ‘tech for good’ examples.

You know, positive change for humanity and all, given a lot of the bad shit we are constantly inundated with from the news. Well, this one is a topic not many are talking about and one I think is worth highlighting… a ‘tech for bad’ example resulting from the COVID pandemic or, as the article puts it, a ‘tech-enabled terror capitalism’ use case.

The bad tech stuff you will read about below could lead us to a dystopian future, like the kind you see in movies (think Minority Report). In some places this tech is already here: it is well practiced and widely used in places like China for population control.

It no doubt raises a ton of interesting questions for the future of humanity and global travel, including one of the core questions from all of us in the West… how will western democracies be equipped to mitigate the use of these technologies to protect our freedoms as citizens?? A highly relevant question of our time.

How technologies of population control are becoming techniques of pandemic response

Already, the pandemic has generated a host of technological solutions bidding to become routinized biometric enforcement mechanisms, including drones to enforce social distancing, quarantine ankle monitors (as are often used on parolees), contact-tracing apps for phones, and fever-scanning drones.

Startups like Feevr, which markets fever-detection scanners and “smart” thermometers, have been quick to enter the pandemic surveillance space. Other companies eager to exploit the pandemic opportunity, like Clearview AI and Palantir, are familiar names. Palantir has already implemented “data trawling” models and proprietary algorithms to help law enforcement conduct immigration raids at workplaces and separate families at borders.

Mijente, a Latinx activist organization, has documented how the infrastructure and cloud computing services provided by companies like Palantir (and Amazon, Salesforce, Dell, Microsoft, and others) make the deportation and detainment of immigrants possible. This approach is being applied to pandemic track-and-trace programs. Companies like IBM, Facebook, Apple, and Google rapidly developed plans for managing community transmission, and public health websites have tracked the movement and online activity of those who had tested positive for Covid-19, often to an alarming degree.

Documents obtained by OpenDemocracy and Foxglove found that the U.K. National Health Service has given Palantir access to the health data of millions of people for a track-and-trace scheme, but the contracts show that Palantir will also be permitted to train other models with this data. Initially, the deal was made for a pound, for three months — which might indicate that the real value of the contract was in the data Palantir would gain. After this three-month period expired, Palantir extended the contract and set its price at £1 million.

>> …And that’s just a sample of COVID-induced monitoring technologies. The article continues with broader context and questions we should be thinking about…

In the aftermath of Hurricane Sandy in 2013, tenants in an affordable housing complex in New York City found that their building now had a facial-recognition scanner installed at various entrances. It seemed as though the landlords hadn’t consulted anyone in the building, and later there was a dispute about whether or not the installation was even legal. Residents told Gothamist in 2019 that they felt like “guinea pigs.” At the Atlantic Plaza Towers apartment complex in Brooklyn in 2018, facial-recognition cameras sprung up overnight in the building, which is rent-stabilized and in a rapidly gentrifying area. A spokesperson for Nelson Management Group, which manages the building, said that they were merely doing it “to create a safer environment for tenants.”

In the European Union, the Dublin Return regulation — which states that asylum seekers must apply for asylum in the first country they reach — has often used biometric data to identify and deport individuals who sought to make it to another country where they may have family or face more favorable conditions.

In Bangladesh, the Rohingya population — a Muslim minority group fleeing ethnic cleansing and violence in Myanmar — have been subject to intensive data collection and monitoring, both from the Bangladeshi government and the UN High Commissioner for Refugees. The UNHCR created an app that would collect refugees’ information, including photographs, and the Bangladeshi government worked with a firm called Tiger IT to register people, taking their fingerprints along with data about their religion, birthplace, and parents’ names. The Bangladeshi Industry Minister has confirmed that this was done to “keep a record” of the population.

In each of these cases, a humanitarian crisis has been seized upon to introduce technological methods of population control, positioning vulnerable populations as ready-to-hand test subjects, disenfranchised enough to be exploited without much recourse.

In a parallel to Naomi Klein’s idea of “disaster capitalism,” unforeseen events are used as a pretext to roll out interventions that might otherwise have been widely opposed, not only by private interests but also through partnerships between private companies (like Palantir) and public-facing agencies, such as the World Food Program, under the auspices of institutions like the United Nations.

“Introducing untested technologies into unstable environments raises an essential question: When is humanitarian innovation actually human subjects experimentation?”

While these interventions may be well-intentioned — initially — this is not a sufficient bulwark against authoritarianism or exploitation. They feed into the idea that crises, disasters, unforeseeable events can be brought under the purview of technologies like biometric data collection or location tracking and so can be solved.

Often they set the stage for a wider deployment of risk-management technology against anyone deemed to be a threat (e.g. the minority Uyghur population in China, treated as de facto terrorists by the Chinese government). Despite their apparent humanitarian aims, they become assimilated to what Darren Byler and Carolina Sanchez Boe call “tech-enabled terror capitalism,” in which technological tools are used to extract profit from marginalized groups and then repackaged for use in schools and offices.

The humanitarian alibi has often been used to inscribe the logic of the security state onto vulnerable populations, as when biometric and surveillance technologies are imposed as a means of delivering aid to populations in crisis situations. In a 2006 report, for example, the United Nations High Commissioner for Refugees said that biometric technology like iris scanning and fingerprinting heralded a “new direction in refugee registration.”

This was framed as a positive development, something that should theoretically benefit refugees on their path to a new life in another country. But as legal scholar Petra Molnar explains, these new technologies for monitoring, administering, and “controlling” migration are themselves largely unregulated and their implications in the long run are unknown; refugees and asylum seekers often bear the brunt of these technologies, serving as involuntary test subjects. They become one of the first populations to be subject to the kinds of control that these new technologies make possible.

In Governing Through Biometrics, Btihaj Ajana notes that when the management and control of our identities and movement is governed through our bodies, the body becomes like a password, albeit one we don’t set and can’t change, that sets inescapable limits on our access to people, places, and opportunities.

Not only is data about a particular individual — their location, their age, gender, temperature, income, mannerisms, time of entry, purchasing habits — understood as a means for predicting their future behavior, but also the data about their social relations: people that they associate with, the locations that they visit, and even the order that they visit them. This can all be used to assign a level of risk to that individual that is then inscribed at the level of the body, associated with biometric markers (one’s iris, one’s face, one’s gait, and so on).

In The Origins of Totalitarianism, Hannah Arendt called this the “imperial boomerang”: when interventions and policies tested abroad are brought back to the “heartland.”

The COVID pandemic has caused this boomerang to be tossed yet again. The pandemic presents an opportunity to create groups of “compliant” and “noncompliant” people out of thin air as new rules are imposed and new systems are deployed to enforce them. It allows for a sort of internal border control, mediated by redeployments of surveillance technologies that grant or withhold access and privileges to people.

But this collection and operationalization of biometric data — at the border, at the entrance to an office, on the floor of a warehouse — is not some neutral means for assessing the risk a given individual poses, with respect to Covid-19 or any other hazard, any more than biometric data in general simply represents the reality or perceived reality of someone’s identity. Such biometric forms of control replicate already existing biases about who must bear the brunt of surveillance technologies.

🟥 >>>> COVID Flight Tracker Data

Airline data I came across.. something to think about as we look to the future of travel.

2019 vs 2020: the number of flights this August is down 48% compared to August last year.

Man, you hate to see American Airlines doing the best out of the carriers in terms of seats sold tho…😭

📲🧑🏽🤝🧑🏻 #followerthings

📚⏯️🎤 #otherthings

I participated in this virtual meeting group called TED Circles last week with a bunch of strangers from around New York and, man, was it cool. These ‘Circles’ take place all around the world, where everyone discusses the same theme during the same month/timeframe and then publishes the data for all to view. An eye-opening experience for me in many ways.

Very much unlike any other virtual gatherings I’ve had - it was a group therapy session, networking event, and educational seminar all in one. It was impactful and empowering. I felt comforted, yet vulnerable. Can’t say I have connected like that (digitally!) in quite some time with total and complete strangers.

This month’s conversation theme was “How Change Happens” and I thought I would share the summary from our group’s discussion below. If you’re interested, message me directly or click the above link to learn more about how to join the next one!

Change happens when we are kind to others and especially to ourselves.

Change is constant and nearly impossible to keep up with. Every generation is more progressive than the one before. Being young, you are often so full of self-righteousness and believe you have it right, all the time. But as you get older and set in your ways, the younger generation will come with even more progressive ideas that will make your efforts seem pedestrian, and their ideas frighteningly radical. We need to be kind to our elders for the sins of their past and we need to be kind to ourselves for the mistakes that we've made and will make in an effort to do better.

Only when we accept that we will not get it right 100% of the time and exercise kindness towards others' faults and our own, can we truly move forward with collective, lasting change.

Change happens when you accept you're going to fail over and over again, but the success is in the trying.

Change happens when we learn to speak up on small things so that we have the muscle strength and confidence to speak up on big things. Noting deviations in ourselves, our friends, our organizations, and our society helps keep us on our desired trajectory.

Change happens when it’s coupled with grace because change is hard.

Change happens when we see the change makers as positive catalysts instead of negative nags. Whistle-blower carries a connotation of an unpleasant, unwanted, and unexpected sound. Reframing their actions as truth-telling accountability will help us be more open to what they have to say.

Change happens when the pain of our status quo is greater than the pain of changing it. That’s not to say it’s black and white...there’s a lot of grey and there will be tradeoffs when the change is made - aim for utilitarian tradeoffs.

———————————————

Stay safe out there. Peace and love to all y’all.

Curiously,

-Block

—

About me:

My friends call me Block. Minnesota born & raised, I now live and work in New York City.

I am endlessly curious and blissfully dissatisfied. I have a passion for new ideas, am obsessed with all things technology, and am always seeking to broaden my perspective while striving for balance, of course.

I am an open finance enthusiast, futurist, investor, entrepreneur, builder, advisor, lifelong learner, hockey player, traveler, podcast addict, hip-hop head, e-newsletter junkie, event planner, and comedic-short producer. Follow me on Twitter here and Instagram here.

“Find a question that makes the world interesting.” - Paul Graham

About #thebalance:

Curating a wide range of balanced topics straight to your inbox. Hoping to pique your curiosity and burst your echo chamber along the way. Original posts occasionally.

If you enjoy #thebalance, please show some love on the interwebs: Tweet some support! or click below.